My son writes stories. I have been building software for a long time. The gap between "kid has a notebook full of characters" and "kid holds a printed comic of his own story" used to require a publisher, an illustrator, and months. In April 2026 I closed it in a weekend.

Friday. Saturday. Sunday. By Sunday evening my son was holding an illustrated version of his own handwritten letter. He has been reading it at bedtime since. So have I. By Monday it was live at talealbum.com.

I called it Tale Album. You put in text or a scan of handwriting. You get out an illustrated picture book: cover, pages, page numbers, dedication, downloadable PDF. It is live now at talealbum.com as a closed-invite beta.

This is a field note about what it is, how it works, and what I actually learned from building it. The lesson is not technical. The lesson is: the tools are already here. What is scarce is intent.

The problem

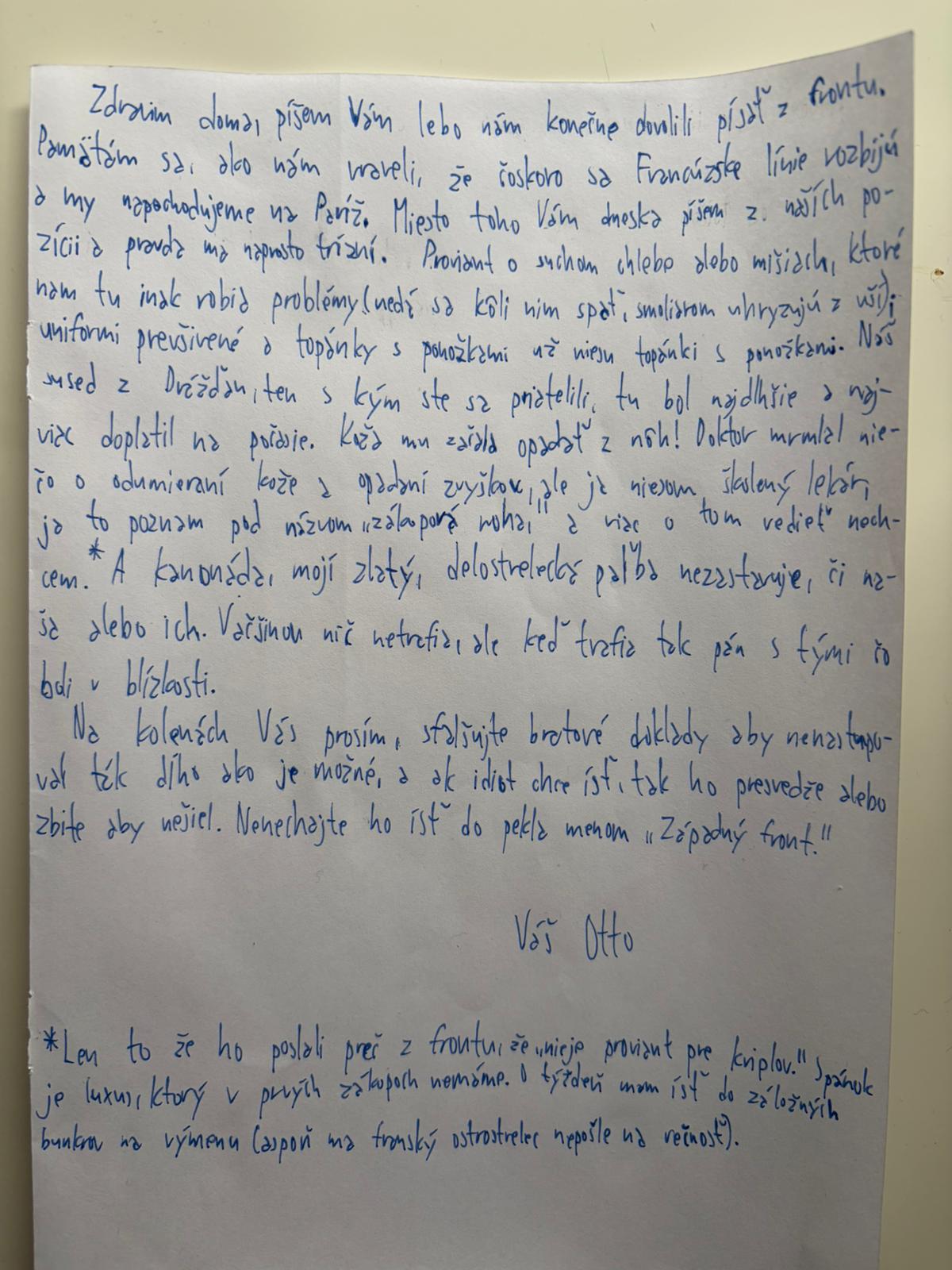

My son writes in Slovak. His stories run two to five pages of handwriting. Cute on paper, but paper stays on the desk.

The market in 2026: Wonderbly drops your kid's name into a pre-written story. Storybook AI generates from a prompt. Drawtopia turns a kid's drawing into a styled illustration. Google's Gemini Storybook does English text in, English book out. Every one of them assumes the kid is the character, not the author. None of them take a Slovak page of handwriting and return Slovak comic pages with the original voice intact. That was the constraint that mattered to me.

I did not want a SaaS. I did not want to upload my kid's handwriting to a third party. I wanted a tool that runs on my machine, uses my API keys, keeps artifacts on my disk, and produces a book I would hand to him in ten years.

What I tried first (and why it broke)

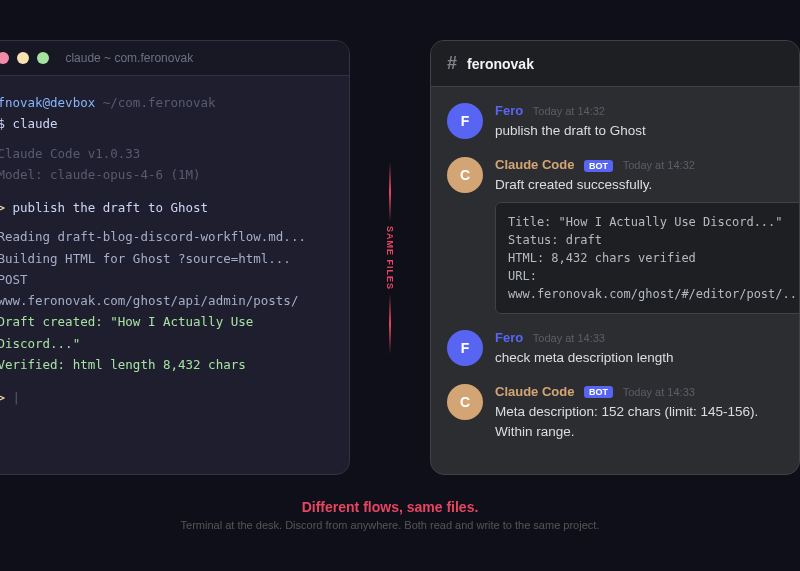

Friday evening: a CLI-only pipeline. Four stages - transcribe, propose, script, render. Feed a file in, wait, get text out. That got me through a dry run with zero images. There was no way to iterate on any stage without re-running the whole thing. Then a Next.js web UI with a stage-by-stage stepper. Each stage has a panel. Each stage has an "approve and continue" and a "regenerate". Every intermediate artifact lands on disk under runs/<run-id>/, so nothing is recomputed unless I ask.

Saturday: image generation. Nano Banana for panels, separate call for the cover, per-stage provider routing. The first rendered panel becomes a reference for subsequent panels so the main character stays on-model.

Sunday: composition, layout, polish. The layout was the thing that broke hardest. Panels were getting cropped, placements were specified in pixels, text was colliding with image boundaries. I replaced pixel placements with a 12-column grid and enforced safe-area margins. That was the afternoon.

What actually works now

The pipeline has seven stages. Each produces an artifact on disk. Later stages read earlier artifacts. A run can be resumed, re-transcribed, or re-rendered without redoing earlier work.

- Input. Text or a scan (JPG, PNG, PDF) is copied into the run directory.

- Transcription. A vision LLM reads the scan and outputs structured JSON + Markdown, with per-paragraph confidence notes. OpenRouter + Gemini Flash Lite by default. Anthropic for PDFs (Gemini does not accept them).

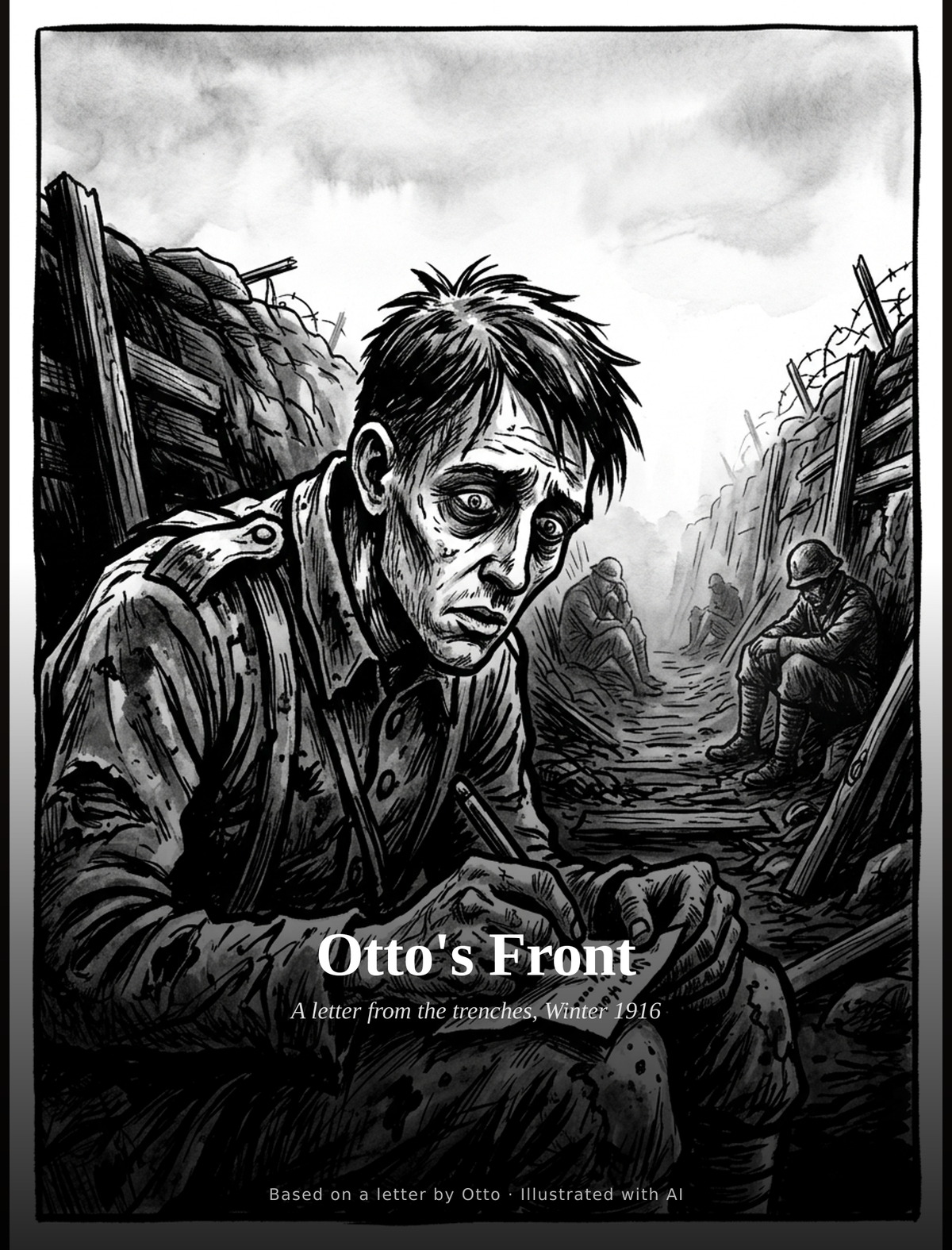

- Proposal. A text LLM drafts a comic proposal: page count, panel count per page, visual style, character list, setting, mood. Output includes three reference artist names so the image model has an aesthetic anchor. A WWI letter returns Jacques Tardi, Kaethe Kollwitz, Joe Sacco. A 2001 retelling returns Moebius, Enki Bilal, Tanino Liberatore. The LLM reads the source tone and picks.

- Script. A text LLM writes the panel-by-panel script. Each panel gets a visual description, dialogue, camera instruction, and mood note. This is the heaviest text stage.

- Pages. An image model renders one image per panel. Nano Banana 2 via OpenRouter. The first rendered panel becomes a reference for subsequent panels so the main character stays on-model.

- Cover. A dedicated image-gen call produces the cover. Separate from panels because a cover is a different craft: safe zones, iconic poses, title legibility.

- Composition. Server-side only. Sharp and SVG stitch the rendered panels into page layouts with title banner, page numbers, byline, dedication. PDF export via pdf-lib. Last page is an archival colophon: original transcribed text, models used, costs, date.

Per-stage provider routing happens in .env. Swapping the transcribe stage from Gemini to Claude is a variable change. Cost logging per-call. A cumulative-spend warning stops runaway runs at a configured threshold.

What it does now

Eight real runs to date. Inputs so far:

- A short retelling of 2001: A Space Odyssey. Twelve pages, Moebius-inspired European bande dessinee style.

- A handwritten WWI letter from the Western Front, 1916. Three pages, Jacques-Tardi-style gritty expressionist ink. The transcription picked up the Slovak dialect and retained the original voice.

- A sci-fi piece about a teen trial in a post-apocalyptic vault, written by a 15-year-old. Five pages, high-contrast neo-noir.

- My son's stories.

The outputs live as PDFs I can AirDrop to my phone and read with him at bedtime.

Five things I learned

One. Pipelines beat prompts. Seven stages with disk-cached artifacts let me iterate. A single god-prompt that generates a comic from text in one call cannot be debugged, cannot be iterated on any part, and produces slop you either accept or trash. Separate the stages. Cache everything. Let the user approve each one.

Two. The expensive stage gets its own architecture. Five of the seven stages are text and cheap. One is image generation and expensive. That one stage gets its own error handling, its own retry, its own cost warnings, its own separate path for the cover because cover is a different craft than interior panels.

Three. Visual consistency comes from reference images, not better prompts. The cover gets generated using panel 1·1 as the reference image. Per-character portraits get pre-rendered and attached to every panel that character appears in. About 3.6 cents per portrait per panel. Cross-book likeness for the price of a coffee. Asking the model "please make the character look the same" with words alone does not work. Asking with pictures does.

Four. Per-panel location anchors scene continuity. Without it, a six-panel conversation in a kitchen drifts into a forest and a hallway because the model picks whichever vibe matches the close-up. Adding one location field to the panel schema and forwarding it as a Location: line to image-gen fixes it. The schema fix beat any prompt-engineering attempt.

Five. Filesystem is fine as a database. No SQL, no ORM, no migrations. Every artifact lives under runs/<userId>/<runId>/. State changes are sentinel files: an empty .archived hides a run, an empty .share-revoked kills a public link. The allowlist is a text file the operator edits with echo. One Hetzner CX22 at €4.20/month runs the whole thing for a hundred invited users. The tools have caught up to the point where personal-scale software does not need cloud-scale infrastructure to feel real.

The bottleneck in building something real is no longer capability. It is intent.

If you want to test it

If you have a kid's story - typed, pasted, or a photo of a handwritten page - message me this week. I will add you to the talealbum.com invite list. A handful a week, no catch, no payment, no email list.

What you get: a printed-quality PDF in about five minutes. The colophon includes the original transcribed text, so the book remembers where it came from. There is also a private share URL you can send to grandma without making her install anything - read-only view, no account needed on her end.

If you run a story, send me the PDF when it is done. I want to see what comes out. The interesting part is not the engineering. It is whose imagination this unlocks, and which kid keeps writing because their last story became a real book.